Performance Benchmarks for High Availability

Sonatype IQ Server High Availability (HA) installations vary based on your organization's needs. This section provides performance metrics for IQ Server HA to guide scaling decisions based on your performance requirements, runtimes, and cost.

We have tested and verified the functionality and performance of IQ Server with the third-party tools, technologies, and platforms named in this section. Other technologies and platforms may not produce the same results and are not supported.

Simulation

Scan application used: webgoat binary scan.

Simulation approach: Multiple policy evaluation requests per minute were submitted against multiple IQ applications over a 20-minute period.

SubmitScan: Submits the scan.xml.gz (of webgoat app) to the performance environment using the endpoint /rest/integration/applications/{applicationName}/evaluations/cli/stages/build

CheckEvaluationStatus: Checks the evaluation status of each submitted scan every second.
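The two steps above can be sketched as a pair of helpers. This is a minimal illustration, not the harness used to produce the benchmarks. It assumes requests are spaced evenly within each minute, which this section does not state. `check_status` is a hypothetical callable supplied by the caller, because the status endpoint is not specified here. The `sleep` and `clock` parameters are injectable so the polling loop can be exercised without a live server.

```python
import time
from typing import Callable, List


def submission_offsets(rpm: int, duration_minutes: int = 20) -> List[float]:
    """Return send times, in seconds from the start, for a fixed request rate.

    Assumption: requests are evenly spaced within each minute.
    """
    if rpm <= 0 or duration_minutes <= 0:
        raise ValueError("rpm and duration_minutes must be positive")
    interval = 60.0 / rpm
    total_requests = rpm * duration_minutes
    return [i * interval for i in range(total_requests)]


def wait_for_evaluation(
    check_status: Callable[[], bool],
    poll_interval: float = 1.0,
    timeout: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> float:
    """Poll until the evaluation completes and return the elapsed seconds.

    check_status is a hypothetical callable that returns True once the
    evaluation of a submitted scan has finished.
    """
    start = clock()
    while True:
        if check_status():
            return clock() - start
        if clock() - start >= timeout:
            raise TimeoutError("evaluation did not complete in time")
        sleep(poll_interval)
```

With these helpers, a driver would submit one scan at each offset and call `wait_for_evaluation` for each submission, recording the returned duration to compute the average and maximum.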

Performance Benchmarks

3 Nodes in the EKS Cluster with Java Optimization

Instance class: m5d.2xlarge

Number of instances: 3

Instance type: AL2_x86_64

K8s version: 1.23

Database instance class: db.m5.4xlarge

Allocated storage: 50 GB

Engine: PostgreSQL

Version: 13.7

1 EFS drive

ALB configured

SSL enabled

External DNS configured

Java optimization using iq_server.javaOpts="-Xms24g -Xmx24g"

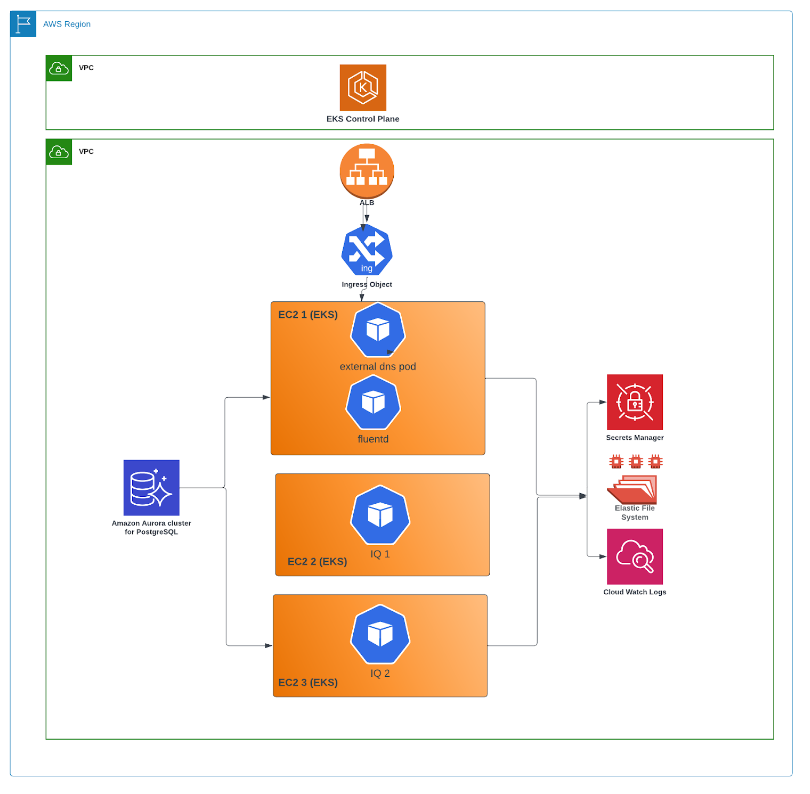

Reference Architecture


Policy Evaluation Performance Benchmarks

| Policy Evaluation Requests per Minute (RPM) | Scans Performed (within 20 minutes) | Failed Scans | Average Duration (in seconds) | Maximum Duration (in seconds) |
|---|---|---|---|---|
| 60 (8x* mode) (86,400 per day / 604,800 for 7 days) | 1200 | 0 | 8 | 17 |
| 120 (16x* mode) (172,800 per day / 1,209,600 for 7 days) | 2400 | 0 | 10 | 21 |

\* x refers to 7.5 policy evaluations per minute (10,800 per day / 75,600 for 7 days).
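The per-day, 7-day, and 20-minute figures in the table follow directly from the requests-per-minute rate. The sketch below reproduces that arithmetic; the function name and the dictionary keys are illustrative only and are not part of any Sonatype tooling.

```python
# "x" in the table footnote: 7.5 policy evaluations per minute.
BASELINE_RPM = 7.5


def evaluation_volume(rpm: float) -> dict:
    """Convert a requests-per-minute rate into the volumes quoted in the table."""
    per_day = rpm * 60 * 24  # minutes per hour * hours per day
    return {
        "multiple_of_x": rpm / BASELINE_RPM,
        "per_day": int(per_day),
        "per_7_days": int(per_day * 7),
        "in_20_minutes": int(rpm * 20),
    }


for rpm in (7.5, 60, 120):
    print(rpm, evaluation_volume(rpm))
```

The same function can be used to estimate which row of the table is closest to an expected daily evaluation volume.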