SBOM Scorecard

Note

The visualizations described on this page were sunset in IQ Server/Lifecycle release 170.

Please refer to Integrated Enterprise Reporting for the latest version of Data Insights, available from release 171 onwards.

The Software Bill of Materials (SBOM) Scorecard is a visual representation of the quality of component upgrade decisions made by your Java development teams. The goal of the SBOM Scorecard is to prompt discussions about component upgrade decisions in your organization.

Note

The SBOM Scorecard only evaluates Java applications.

Accessing the SBOM Scorecard

Log in to your instance of Sonatype Lifecycle.

Select Data Insights from the left navigation menu.

From the Data Insights landing page, select SBOM Scorecard.

If you see an error message like the one below, it means that Lifecycle hasn't collected enough data to generate an SBOM Scorecard.


Reading the Scorecard

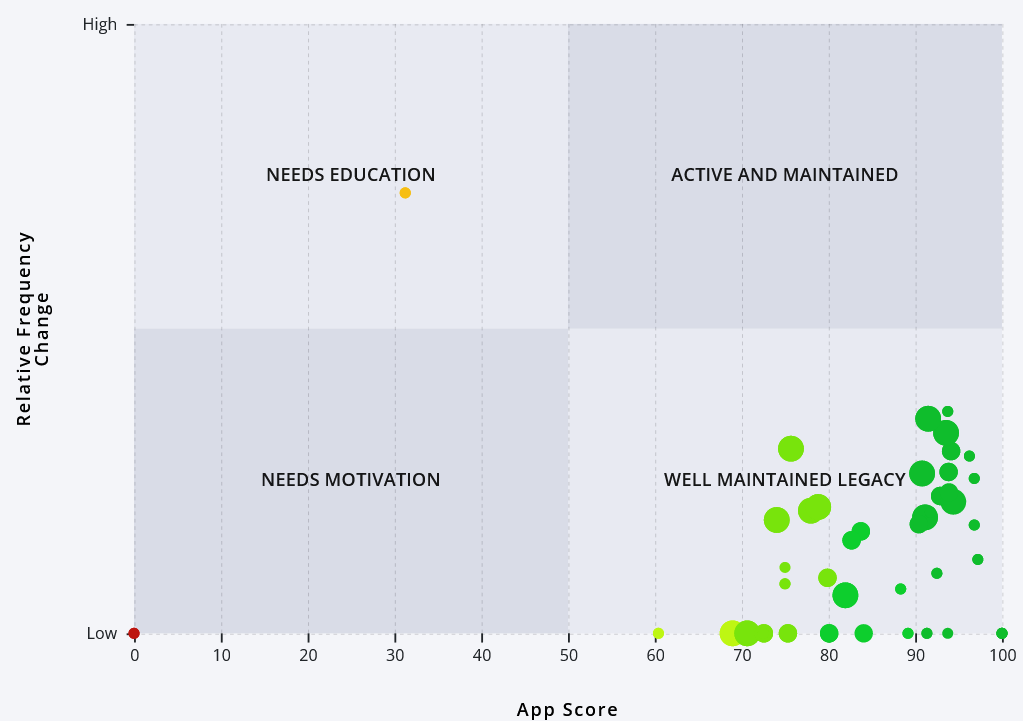

The SBOM Scorecard is presented as a four-quadrant grid, as in the example shown here.


The Quadrants

The SBOM Scorecard has an X-axis and a Y-axis and is divided into four quadrants. The X-axis measures your apps by the quality of their component upgrade decisions, and the Y-axis measures them by the frequency of their component upgrades. Apps in the left-most quadrants show a disproportionate number of low-quality upgrade decisions compared to their counterparts in the right-most quadrants.

Needs Motivation: apps with components that are upgraded less frequently, and whose upgrade paths are less-than-optimal.

Needs Education: apps that are upgraded frequently, but whose upgrade paths are less-than-optimal.

Active and Maintained, and Well Maintained Legacy: apps that are making good component upgrade decisions.

Though it may seem obvious, it's important to acknowledge that the quadrant names are somewhat subjective. Lifecycle does not understand your apps in the context of your development budget, business goals, developer talent pool, and so on.
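
The quadrant logic described above can be sketched in a few lines of Python. This is an illustration only, not Lifecycle's implementation: the source does not publish the axis scales or where the quadrant boundaries fall, so `QUALITY_MIDPOINT`, `FREQUENCY_MIDPOINT`, and the `AppPosition` type are hypothetical. The placement of the two right-hand quadrants (frequent upgrades for Active and Maintained, infrequent for Well Maintained Legacy) is also an assumption inferred from their names.

```python
from dataclasses import dataclass

# Hypothetical boundaries: the real axis scales and midpoints are not documented.
QUALITY_MIDPOINT = 50.0
FREQUENCY_MIDPOINT = 50.0


@dataclass(frozen=True)
class AppPosition:
    """Position of one scanned app on the grid (illustrative type)."""
    name: str
    upgrade_quality: float    # X-axis: quality of component upgrade decisions
    upgrade_frequency: float  # Y-axis: frequency of component upgrades


def quadrant(app: AppPosition) -> str:
    """Return the quadrant name for an app, under the assumptions above."""
    good_quality = app.upgrade_quality >= QUALITY_MIDPOINT
    frequent = app.upgrade_frequency >= FREQUENCY_MIDPOINT
    if good_quality:
        # Right-hand quadrants: good upgrade decisions.
        return "Active and Maintained" if frequent else "Well Maintained Legacy"
    # Left-hand quadrants: less-than-optimal upgrade paths.
    return "Needs Education" if frequent else "Needs Motivation"
```

For example, `quadrant(AppPosition("billing", 20.0, 80.0))` falls in Needs Education under these assumed midpoints: frequent upgrades, but less-than-optimal paths.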

Bubbles and Scores

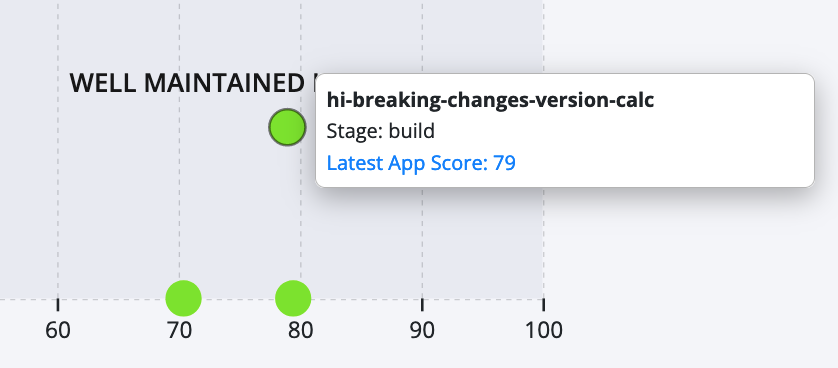

Each bubble represents a scanned Java app. Hovering over the bubble shows you the application name, stage, and latest app score.


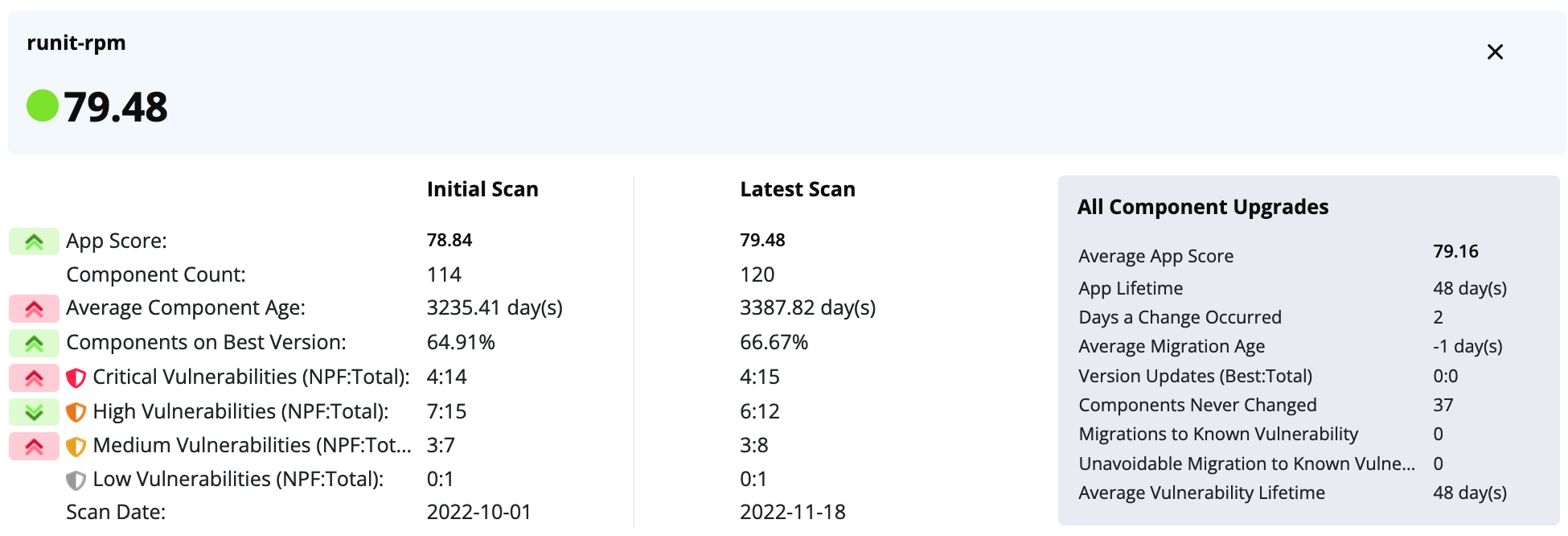

Click on a bubble to see more details on your initial scan, the latest scan, and data on all component upgrades.


The arrows on the left show you the difference between the initial and the latest scan (you want the arrows to be green). The information under All Component Upgrades represents how you got there.

The idea is to provide enough information for you to identify behavior changes and start a conversation about what is happening. It's all about actionable direction.

The rest of the values are as follows:

| Value | Meaning |
|---|---|
| App Score | The app's score on the latest scan. See Understand How Apps are Scored for details. |
| Component Count | The number of identified components. |
| Average Component Age | The average age of the components in the bill of materials on this scan date. |
| Components on Best Version | How many components in the application are on the best version. |
| Critical Vulnerabilities | The count of application components with a critical vulnerability, i.e., a CVSS score >= 9.0. NPF shows how many of these have “No Path Forward”, meaning the latest version is also vulnerable. |
| High Vulnerabilities | The count of application components with a high vulnerability, i.e., a CVSS score >= 7.0 and < 9.0. NPF shows how many of these have “No Path Forward”, meaning the latest version is also vulnerable. |
| Medium Vulnerabilities | The count of application components with a medium vulnerability, i.e., a CVSS score >= 4.0 and < 7.0. NPF shows how many of these have “No Path Forward”, meaning the latest version is also vulnerable. |
| Low Vulnerabilities | The count of application components with a low vulnerability, i.e., a CVSS score < 4.0. NPF shows how many of these have “No Path Forward”, meaning the latest version is also vulnerable. |
| Scan Date | The date of the scan. |
| Average App Score | The application score averaged across all scans. |
| App Lifetime | The number of days the application has been a part of this Data Insight. |
| Days a Change Occurred | How many distinct days the application BOM was changed. Application BOM is defined as identified components. Consider this an activity metric of how many times the team worked on the SBOM. |
| Average Migration Age | The average age of the version upgraded to when an upgrade occurred. This metric measures how crusty the chosen components are. |
| Version Updates (Best:Total) | Number of times the best version was chosen compared to the total choices. For example, (10 : 76) means that out of 76 migrations, the best version was chosen 10 times. |
| Components Never Changed | The number of components that were never upgraded or removed since we have been monitoring this application. |
| Migrations | How many version upgrades were made. One component updated ten times would count as ten. |
| Migrations to Known Vulnerability | Number of migrations to a version that had a known vulnerability. |
| Unavoidable Migration to Known Vulnerability | Number of migrations to a version that had a known vulnerability when that version was the only choice (a “No Path Forward” migration). |
| Average Vulnerability Lifetime | How long, on average, a vulnerability exists in this application. |
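
The CVSS thresholds in the table can be expressed as a small Python sketch. The cut-offs (9.0, 7.0, 4.0) come from the table; the function names `severity_band` and `count_by_severity` are hypothetical, and the sketch assumes each component is represented by a single CVSS score (for instance, its highest), which the source does not specify.

```python
from collections import Counter
from typing import Iterable


def severity_band(cvss_score: float) -> str:
    """Map a CVSS score to the severity band used in the table above."""
    if not 0.0 <= cvss_score <= 10.0:
        raise ValueError(f"CVSS score out of range: {cvss_score}")
    if cvss_score >= 9.0:
        return "Critical"
    if cvss_score >= 7.0:
        return "High"
    if cvss_score >= 4.0:
        return "Medium"
    return "Low"


def count_by_severity(component_scores: Iterable[float]) -> Counter:
    """Count components per band, assuming one CVSS score per component."""
    return Counter(severity_band(score) for score in component_scores)
```

Calling `count_by_severity([9.8, 7.5, 7.0, 2.1])` would tally one Critical, two High, and one Low component.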

Understand How Apps are Scored

Because the SBOM Scorecard is actively improved, the specific mechanisms for scoring your apps are constantly being refined. To understand your Scorecard specifically, keep in mind the following eight rules used to score migrations. These consist of four objective rules (shown in red) and four subjective rules (shown in purple).


These rules are based on our 2021 State of the Software Supply Chain report. Check out page 23 of the report to review those rules. To summarize:

Avoid alpha, beta, milestone, etc. releases

Don't upgrade to a vulnerable version

Upgrade to lower-risk versions whenever possible

If components are published twice in quick succession, choose the later version

Choose migration paths that others have chosen

Choose migration paths that minimize breaking code changes

Choose versions that the majority of your peers are using

All else being equal, choose the newest version

A BOM is scored every time a dependency is changed, removed, or upgraded. The application score is calculated as the average BOM score across all scans where a change in the BOM is detected. Individual component migrations are judged against other possible migration paths. For example, imagine you upgrade a component from its 1.0 to its 4.0 version, which is the very latest, skipping the 2.0 and 3.0 releases. In this scenario, there are four possible migration paths:

1.0 → 1.0

1.0 → 2.0

1.0 → 3.0

1.0 → 4.0

Your migration path is scored only against these four possibilities. In other words, a migration is not scored against a hypothetical best path, only against paths you could actually have taken.
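
As a rough illustration of the two ideas above, the following Python sketch enumerates the candidate migration paths for a component and averages BOM scores over the scans where the BOM changed. It is a sketch under stated assumptions, not Lifecycle's scoring code: `candidate_paths` and `application_score` are hypothetical names, the version list is assumed to be ordered from oldest to newest, and how an individual BOM score is computed is not covered here.

```python
from statistics import mean
from typing import List, Optional, Sequence, Tuple


def candidate_paths(current: str, versions: Sequence[str]) -> List[Tuple[str, str]]:
    """List the paths a migration is judged against: staying on the current
    version or moving to any later release. Assumes `versions` is ordered
    oldest to newest and contains `current`."""
    start = versions.index(current)
    return [(current, target) for target in versions[start:]]


def application_score(scans: Sequence[Tuple[float, bool]]) -> Optional[float]:
    """Average the BOM score over scans where a BOM change was detected.
    Each scan is a (bom_score, bom_changed) pair; returns None when no
    scan recorded a change."""
    changed_scores = [score for score, bom_changed in scans if bom_changed]
    return mean(changed_scores) if changed_scores else None
```

With the example from the text, `candidate_paths("1.0", ["1.0", "2.0", "3.0", "4.0"])` yields the four paths listed above, including the option of staying on 1.0.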

Workflows

The data in the SBOM Scorecard, and its usefulness to you, will depend a lot on the size, maturity, and business goals of your organization. However, here's a quick, simple workflow to get value from the analysis.

On a monthly basis, review the Scorecard and identify the apps in the Needs Motivation quadrant. Also, identify the development teams responsible for those apps.

If you need help identifying the development team, take the application name from the bubble in the scorecard, go to Orgs and Policies, search using the application name, select the correct app, and scroll down to the Access box to see the individuals and roles who have access to this app.

Schedule 5-minute meetings with those teams and ask some or all of the following questions:

"Is this a legacy app?"

"What's the mission criticality of this app?"

"Are there any formal or informal processes for upgrading components? What are they?"

"Are you (the developers) upgrading to the latest version every time?"

"Are you (the developers) using any Lifecycle integrations to inspect packages before consuming them?"

Document the call and record the answers. Use that information to distinguish between apps that can't improve and apps that can.

Now review the Scorecard and identify the apps in the Active and Maintained quadrant. Schedule 5-minute meetings, ask the same questions, and then document the call.

Compare and contrast the two groups of apps. Identify the processes you see in the Active and Maintained apps but don't see in the Needs Motivation apps.

Publicize your findings internally.

Regardless of what happens next, don't resort to scolding. Remember, component maintenance and hygiene is a messy, complicated subject. Instead, focus on the collaborative aspect. How can you enable teams on the left to move to the right?